From convenience to choice: how do we remain authors of our work in the world of AI?

TL;DR

- AI is spreading fast because it's convenient, but convenience has a hidden cost: when you let it make the small decisions, you stop being the author of the work and become an editor of what a machine guessed at.

- The tools work best when they sit inside your iteration loop (like Vizcom) rather than replacing the thinking with a "magic wand" - the thinking is the point.

- Generative AI also carries documented biases and quietly narrows the range of ideas across a population, so staying an author means having your own vision clear before you prompt, not after.


Less than 25% of the global population have interacted with AI.

Already, 25% of the global population have interacted with AI.

How you frame that statement makes a huge difference to how you perceive this rapidly developing technology. But to believe AI isn't going to drastically change the way we live and work feels more and more naive.

25% of the global population equates to 2 billion people, mostly young people and professionals. This technology is developing at such a rate that it won't be long before almost every business has to make a decision about how, not whether, they adopt it. So even if individuals have no interest in opening ChatGPT, AI will soon be embedded into the services they use on a daily basis, or will at least be a key tool in how those services were built.

The pull of convenience

Some people worry about the environmental impact of AI, but the concern rarely survives contact with the tools. You try it to see if it's useful. A few months later you're reliant on Claude for spreadsheets and ChatGPT for copy, and the impact question feels very far away. Meanwhile businesses are being told by investors they have no choice but to build AI into their product - stay ahead or fall behind - with barely a thought for what it does to their users.

It's like a drug whose side effects we haven't worked out yet. We'll worry about those later. Right now we're addicted, and what's the addictive aspect of it? Convenience.

If you've ever picked up an old Nokia 3310, or booted up the PlayStation 1 you found in your parents' attic, you know how quickly nostalgia turns into frustration. Technology has advanced enormously over the past three decades, but mostly in small steps. AI feels like a giant leap.

When it launched, ChatGPT became the fastest-growing consumer app on record at that point - 100 million users in two months - initially because of the novelty, but it has kept users because of convenience. Unlike Google, you can search how you speak and get tailored answers that feel accurate and trustworthy, even when they aren't. It can edit articles, draft emails, and design a party invitation in seconds.

On the visual side, if you haven't seen the Will Smith Eating Spaghetti test, it's worth a look. Videos of Will Smith eating spaghetti, generated by the best tools of each passing year, chart the jump from dystopian nightmare to something a lot of people can no longer tell from reality. The technology now lets people who've never learned to code, edit video, animate or CAD do all of those things by typing prompts into a chat box.

AI speeds up productivity - at what price?

Back in 2022 when Midjourney came out, we started experimenting with turning design ideas into visuals. It was hit and miss, mostly miss. There were limited ways to tailor the output, post-editing was almost impossible, and it was so obviously biased it got boring fast. Ask it for an Asian woman wearing a backpack and she'd turn up as some sexy manga character. A year later we'd all cancelled our accounts.

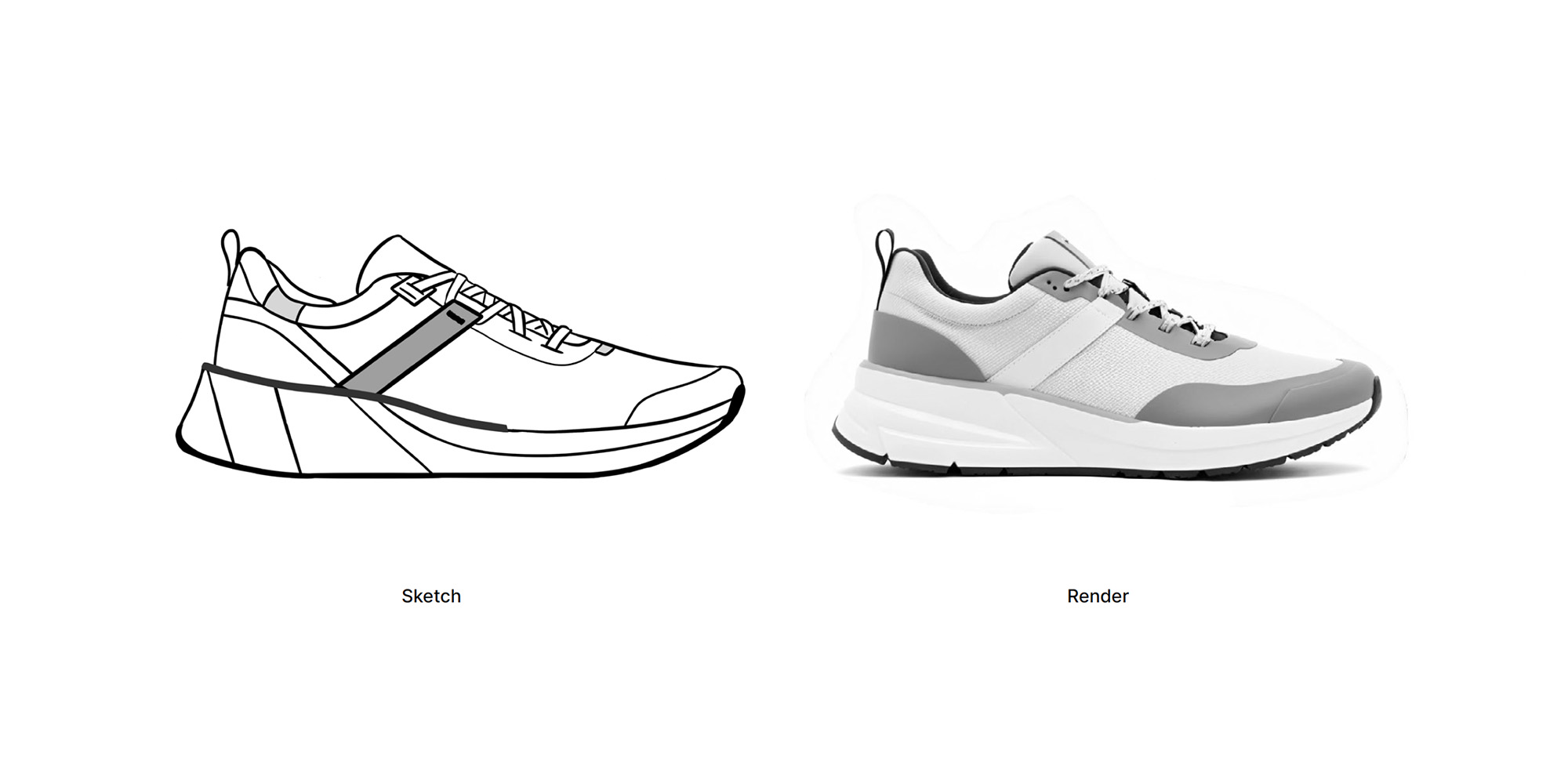

Two years on, working on a shoe design, we leveraged Vizcom to help visualise ideas. Vizcom is built to sit inside the creative process, and it can now turn a sketch into a 3D CAD model. We could go from sketch to rendered concept, with colour and material, ten times faster than with traditional tools.

Were we overjoyed? No. It took the fun out of design.

That sounds flippant, but I don't think it is. Fun, in creative work, is shorthand for the hundred small decisions you make on the way to an outcome - the ones that add up to a point of view. Taste. Judgement. Authorship. The thing a client is actually paying for. When you hand those decisions to a tool, you don't just speed up the work. You thin it out. You become an editor of something a machine guessed at, rather than the author of something you meant.

In 2025 we experimented with rendering sneakers in Vizcom from simple sketches. Whilst the potential to speed up the workflow was impressive, the deliberate decisions on detailing were taken away and the result felt hollow.

So is the future no fun?

Not quite. But I think the trade is more uneven than we're admitting.

I'm not particularly adept at Excel, so Claude is phenomenally helpful with spreadsheets. I'm much more experienced in building products with complex fluid surfaces in SolidWorks - refining them endlessly, getting them production-ready. The Claude integration with Onshape, another CAD program, can build basic solid models, which is intriguing, but it doesn't solve a problem that I have.

However, it would be foolish to think the technology stops here. So let's assume that, in time, AI will CAD better than I can.

Here's the pattern I keep noticing: AI is genuinely useful where I have no expertise to lose, and genuinely threatening where I do. Not because it's better than me yet, but because using it means not doing the thinking myself. And ‘the thinking’ is the point. The fluid surface I model by hand isn't just a shape - it's hours of small decisions that give me a complete understanding of the object I have built.

What needs to happen alongside more capable AI is an ability to test, tweak and refine. Midjourney in 2022 was a fruit machine - you popped in your penny, pulled the lever, and had almost no control over what came out. Tools like Vizcom are better because they sit inside the iteration loop rather than replacing it. In our book, the best tools are just that: tools. They support the creative process rather than getting creative themselves and taking the critical thinking away. But there are plenty of AI products that are less ‘tool’ and more ‘magic wand’, and if you have no vision of what you want to make, AI will happily make something for you. That move from human creation to machine creation is quiet, and yet hugely important.

The invisible cost

When I push Claude to draft something for me, the version that comes back is almost always competent and always not quite right. Fixing it often takes about as long as writing it from scratch would have taken - though starting with a blank page always seems more intimidating. But the real cost is subtler. I've anchored myself to someone else's first idea before I've had my own.

Multiply that across a generation of designers, writers, founders, students, and the question stops being "is AI useful?" and starts being "who are we becoming when we use it like this?" A population of editors rather than authors. People who can tell you what's wrong with a thing but have lost the muscle for starting one.

And it's worse than that, because the "someone else" you're anchoring to isn't neutral.

The biases you inherit

Every generative AI tool carries the biases of the data it was trained on, and those biases are now well-documented. Bloomberg's 2023 analysis of Stable Diffusion found that the model depicted high-paying roles as overwhelmingly white and male - doctors, lawyers, CEOs - while overrepresenting darker skin tones in low-paying occupations. It generated images of fast-food workers as people of colour 70% of the time, even though 70% of US fast-food workers are white. A 2024 Washington Post investigation found similar tools defaulted to "African men in Western coats standing in front of thatched huts" when asked to depict wealthy people in African countries - transplanting Western stereotypes onto basic prompts about the rest of the world. Peer-reviewed research in Scientific Reports last year documented what the authors called "racial homogenisation": Middle Eastern men rendered as near-identical bearded figures in traditional dress; women across professions sexualised in ways their male counterparts weren't.

The same thing happens in text. A study published in 2024 compared 2,200 college admissions essays - some written by humans, some by GPT-4 - and found that each additional human essay added more new ideas to the collective pool than each additional AI essay did. Relying on these tools means we are converging on narrower ranges of ideas than if we were working alone. The individual output looks creative; the population of outputs quietly flattens.

As a designer, I find bias the hardest part to factor in, because bias in an AI tool doesn't arrive labelled. It arrives as a competent render, a plausible paragraph, a reasonable first draft. And even if you do recognise it, how do you prompt against it? Most people don't. Most people take the first plausible output and move on, unwittingly becoming an amplifier of these biases.

Staying the author

It would be hypocritical to argue for abstinence. I have integrated AI into several aspects of my job, from carrying out research to building dashboards that improve workflow. But staying the author of your work in this environment is going to take more than using AI "thoughtfully". We have to have the vision clear in our own heads so that we can recognise when we are being pulled off course. We have to remind ourselves that these tools are biased, and not blindly accept what they produce. And we have to choose, case by case, which decisions are ‘the work’ - and hold onto them.

That's the shift I think we're underestimating. Not whether AI changes our work, but whether we remain the authors of it.